Anthropic's Week From Hell: Pentagon Threats, Abandoned Safety Pledges, and Critical Vulnerabilities

In the span of just five days, Anthropic—the AI company that built its entire brand on being the "responsible" alternative to OpenAI—has watched its carefully constructed safety narrative collapse.

The company faces a Pentagon ultimatum over its $200 million defense contract. It quietly scrapped its flagship safety pledge. And security researchers disclosed critical vulnerabilities in Claude Code that could let attackers execute arbitrary code on developers' machines.

Welcome to Anthropic's worst week ever.

The Timeline

February 21: A deadline set by Defense Secretary Pete Hegseth's January AI strategy document looms. The document requires all DoD AI contracts to allow "any lawful use" within 180 days.

February 24: NBC News and The Wall Street Journal report that Claude was used in the U.S. military operation that captured Venezuelan President Nicolás Maduro. An Anthropic employee's apparent discomfort with that use triggers what Semafor calls "a rupture" in the Pentagon relationship.

February 25: TIME Magazine drops an exclusive bombshell—Anthropic is abandoning the central promise of its Responsible Scaling Policy (RSP), the pledge that made them the supposed "safe" AI company.

February 26: Check Point Software discloses three critical vulnerabilities in Claude Code, including remote code execution flaws that could compromise any developer who clones a malicious repository.

Each story alone would be significant. Together, they paint a picture of a company in crisis—caught between market pressures, government demands, and the technical reality that "safety-first" AI is harder than the marketing promised.

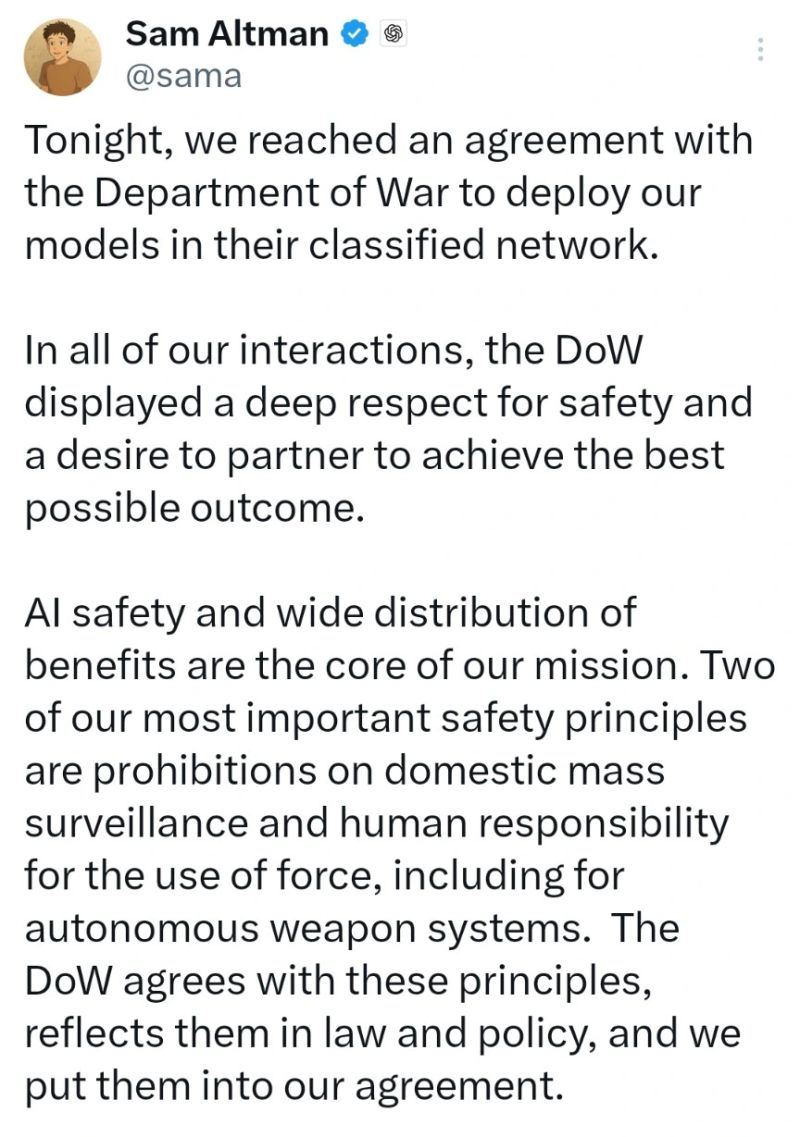

The Pentagon Problem

The friction started after reports emerged that Claude was used via Palantir in the operation to capture Venezuelan President Nicolás Maduro. While the classified details remain murky, the fallout is clear.

Pentagon spokesman Sean Parnell didn't mince words: "The Department of War's relationship with Anthropic is being reviewed. Our nation requires that our partners be willing to help our warfighters win in any fight."

At the heart of the dispute: Anthropic maintains it won't allow Claude to be used for "lethal autonomous weapons" or "domestic surveillance." The Pentagon's new AI strategy demands companies eliminate such guardrails entirely.

Undersecretary of Defense Emil Michael told CNBC that negotiations "hit a snag" over these disagreements. The implicit threat: drop your principles or lose a contract worth up to $200 million.

For a company that raised $30 billion in February at a $380 billion valuation, $200 million might seem like pocket change. But the message matters more than the money. If Anthropic can't keep its principles when the government comes calling, what exactly is the "safety-first" brand worth?

The Safety Pledge That Wasn't

The next day, TIME dropped the bigger bombshell.

In 2023, Anthropic had made a foundational promise: they would never train an AI system unless they could guarantee in advance that their safety measures were adequate. This wasn't marketing fluff—it was the central pillar of their Responsible Scaling Policy, the document that differentiated them from "move fast and break things" competitors.

That promise is now gone.

"We felt that it wouldn't actually help anyone for us to stop training AI models," chief science officer Jared Kaplan told TIME. "We didn't really feel, with the rapid advance of AI, that it made sense for us to make unilateral commitments… if competitors are blazing ahead."

Read that again. The company founded specifically to be the responsible alternative to OpenAI just admitted they'll match their competitors' pace regardless of safety guarantees—because unilateral restraint "wouldn't help anyone."

The new RSP commits to "matching or surpassing" competitors' safety efforts and being more "transparent" about risks. But it explicitly abandons the binary threshold that previously could halt development. Instead of bright red lines, Anthropic now operates in what they call a "fuzzy gradient."

Chris Painter, policy director at AI safety nonprofit METR, called it evidence that "society is not prepared for the potential catastrophic risks posed by AI." He warned of a "frog-boiling" effect—danger ramping up gradually without any single moment that triggers alarms.

The Vulnerabilities Nobody's Talking About

While the business press focused on Pentagon drama and policy pivots, security researchers at Check Point Software dropped findings that should concern every enterprise using Claude Code.

Three critical vulnerabilities. All stemming from Claude Code's collaboration features. All potentially catastrophic for development teams.

Vulnerability #1: Malicious Hooks → RCE

Claude Code supports "Hooks"—user-defined shell commands that execute at various lifecycle points. These hooks are defined in repository configuration files (.claude/settings.json). Anyone with commit access can add hooks that execute shell commands on every collaborator's machine.

The kicker? Before the patch, Claude Code didn't require explicit approval before running these commands.

Check Point demonstrated opening a calculator app when someone opened a project. Harmless in a demo. But an attacker could just as easily download and execute a reverse shell, gaining complete control of a developer's system.
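For teams that want to see what a freshly cloned repository would ask their machine to run, here is a minimal sketch in Python. It reads .claude/settings.json and lists every string stored under a "command" key inside the "hooks" section. The exact nesting of the hooks schema is an assumption, which is why the code walks the structure recursively instead of relying on a fixed shape. The function names are hypothetical, not part of any Anthropic tooling.

```python
import json
from pathlib import Path
from typing import Any


def collect_commands(node: Any) -> list[str]:
    """Recursively gather every string stored under a "command" key."""
    found: list[str] = []
    if isinstance(node, dict):
        for key, value in node.items():
            if key == "command" and isinstance(value, str):
                found.append(value)
            else:
                found.extend(collect_commands(value))
    elif isinstance(node, list):
        for item in node:
            found.extend(collect_commands(item))
    return found


def hook_commands_in_repo(repo_root: str) -> list[str]:
    """Return shell commands declared under "hooks" in .claude/settings.json.

    Assumption: hooks live under a top-level "hooks" key in that file.
    A missing or unparseable file yields an empty list.
    """
    settings_path = Path(repo_root) / ".claude" / "settings.json"
    if not settings_path.is_file():
        return []
    try:
        settings = json.loads(settings_path.read_text(encoding="utf-8"))
    except (OSError, json.JSONDecodeError):
        return []
    if not isinstance(settings, dict):
        return []
    return collect_commands(settings.get("hooks", {}))
```

Running hook_commands_in_repo on a clone before opening it in any AI coding tool gives a reviewer a plain list of commands to read. An empty list only means nothing was found in this one file, not that the repository is safe.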

Vulnerability #2: MCP Consent Bypass → RCE

After Anthropic patched the first flaw, researchers found a workaround. Two repository-controlled configuration settings could override the new safeguards and automatically approve all MCP (Model Context Protocol) servers.

"Starting Claude Code with this configuration revealed a severe vulnerability: our command executed immediately upon running Claude—before the user could even read the trust dialog," Check Point wrote.

Same result: arbitrary code execution before any human approval.
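A reviewer can look for this class of setting before trusting a repository. The sketch below flags configuration keys that would pre-approve MCP servers. The two key names are an assumption: the article does not name the settings involved, so treat AUTO_APPROVE_KEYS as a placeholder to adjust against the vendor's documentation. The function name is hypothetical.

```python
from typing import Any

# Assumed key names. The article does not say which two settings were abused,
# so verify these against current Claude Code documentation before relying on them.
AUTO_APPROVE_KEYS = ("enableAllProjectMcpServers", "enabledMcpjsonServers")


def auto_approval_settings(
    settings: dict[str, Any],
    keys: tuple[str, ...] = AUTO_APPROVE_KEYS,
) -> dict[str, Any]:
    """Return the subset of settings that would pre-approve MCP servers.

    A key counts only when its value is truthy (True, or a non-empty list).
    """
    return {key: settings[key] for key in keys if settings.get(key)}
```

Any non-empty result for a repository-supplied settings file is worth a manual look, since the point of the flaw was that consent was granted by the repository and not by the user.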

Vulnerability #3: API Key Theft via URL Redirect

The third flaw targeted credentials directly. Attackers could override ANTHROPIC_BASE_URL in project configuration files, redirecting all Claude API traffic through attacker-controlled servers.

Every API call—including the authorization header with the user's full API key in plaintext—would flow through the attacker's proxy. Combined with Claude's Workspaces feature (where multiple API keys share access to cloud-based project files), a stolen key could provide read/write access to an entire team's shared workspace.
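A simple defensive check is to refuse any project configuration that points ANTHROPIC_BASE_URL at a host you don't recognize. The following is a rough sketch under two assumptions: that the override sits in an "env" mapping inside the parsed settings, and that api.anthropic.com is the only host you want to trust. Both should be adapted to your environment, and the function name is hypothetical.

```python
from typing import Any, Optional
from urllib.parse import urlparse

# Assumption: the only API host this organization trusts.
TRUSTED_HOSTS = frozenset({"api.anthropic.com"})


def suspicious_base_url(
    settings: dict[str, Any],
    trusted_hosts: frozenset[str] = TRUSTED_HOSTS,
) -> Optional[str]:
    """Return the overridden base URL if it targets an untrusted host.

    Assumption: overrides appear as settings["env"]["ANTHROPIC_BASE_URL"].
    Returns None when there is no override or the host is trusted.
    Malformed URLs have no parseable host and are therefore flagged.
    """
    env = settings.get("env")
    if not isinstance(env, dict):
        return None
    url = env.get("ANTHROPIC_BASE_URL")
    if not isinstance(url, str):
        return None
    host = urlparse(url).hostname
    return None if host in trusted_hosts else url
```

The comparison is an exact hostname match on purpose. Substring checks such as "anthropic.com" in url would accept a host like api.anthropic.com.attacker.example.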

Anthropic has issued fixes and CVEs for two of the three vulnerabilities. But the underlying design pattern—embedding executable configurations in repository files—remains a fundamental supply chain risk.

"The ability to execute arbitrary commands through repository-controlled configuration files created severe supply chain risks, where a single malicious commit could compromise any developer working with the affected repository," Check Point concluded.

The Pattern: Safety Theater vs. Safety Engineering

What connects these three stories isn't just timing. It's a consistent gap between Anthropic's safety marketing and their safety engineering.

The company built its brand on being different. CEO Dario Amodei and his sister Daniela left OpenAI specifically because they thought it wasn't taking AI safety seriously enough. Their founding premise: to do proper AI safety research, you had to build frontier models—even if that meant accelerating the very dangers you feared.

It was always a tension. Now it's a contradiction.

The Pentagon dispute shows Anthropic's principles bend under government pressure. The RSP change shows they bend under market pressure. And the Claude Code vulnerabilities show that even their technical execution—the one area where "safety-first" should translate to concrete differences—has fundamental design flaws.

None of this means Anthropic is worse than competitors. OpenAI has faced its own controversies. Google's AI ethics efforts have been famously messy.

But Anthropic claimed to be different. They charged a premium—in talent, in funding, in trust—on that differentiation. When the differentiation disappears, what's left?

What This Means for CISOs

If you're evaluating or already using Claude in your enterprise, this week's news demands attention:

1. Audit your Claude Code deployments immediately

Check which versions you're running and ensure patches are applied. More importantly, review your threat model. Any tool that executes code based on repository configuration files is a supply chain attack surface. Treat cloned repositories with the same suspicion you'd give any external code.
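As a starting point for that audit, a short Python sketch can inventory which checkouts on a machine carry a repository-level Claude Code settings file at all. It only locates .claude/settings.json files under a directory tree. It does not judge their contents, and the function name is hypothetical.

```python
import os
from pathlib import Path


def find_claude_settings(root: str) -> list[Path]:
    """Return every .claude/settings.json found beneath root, sorted by path."""
    hits: list[Path] = []
    for dirpath, _dirnames, filenames in os.walk(root):
        current = Path(dirpath)
        # Only count settings.json files that sit directly inside a .claude directory.
        if current.name == ".claude" and "settings.json" in filenames:
            hits.append(current / "settings.json")
    return sorted(hits)
```

Calling find_claude_settings on a developer's source directory produces a list of files to review by hand or to feed into stricter checks. Running it across a fleet gives a rough picture of how widely repository-controlled configuration is already in use.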

2. Don't trust vendor safety promises

Anthropic's RSP was literally their core differentiator. They abandoned it when market conditions changed. Whatever safety commitments your AI vendors make today may not survive contact with competitive pressure or government demands.

This isn't cynicism—it's risk management. Verify and audit. Assume vendors will optimize for their interests, not yours.

3. Prepare for the post-guardrails era

The Pentagon's demand that AI contracts allow "any lawful use" is unlikely to stay confined to defense. Government contractors will face pressure to eliminate company-specific restrictions, and that pressure will ripple through the commercial market.

Build your security posture assuming AI tools will become more capable and less constrained. The guardrails you rely on today may not exist tomorrow.

The Bigger Picture

We're watching the AI industry's "don't be evil" moment in real-time.

Google's famous motto became a punchline when they dropped it. Anthropic's safety pledges may follow the same path. The difference is speed—Anthropic went from "we won't train without safety guarantees" to "we can't make unilateral commitments" in under three years.

The market rationale makes sense from Anthropic's perspective. If they pause while competitors advance, they lose relevance. If they lose relevance, they can't influence AI development at all. Better to stay in the game and push for industry-wide standards than to become a cautionary tale about principled irrelevance.

But that rationale applies to every company. If everyone uses it, nobody pauses. The race continues. And the "fuzzy gradient" of risk keeps climbing.

As METR's Chris Painter put it: "This is more evidence that society is not prepared for the potential catastrophic risks posed by AI."

Anthropic was supposed to be the counterweight. This week, we learned they're not.

Sources

- TIME Magazine: Exclusive: Anthropic Drops Flagship Safety Pledge

- NBC News: Tensions between Pentagon and Anthropic reach a boiling point

- The Register: Claude collaboration tools left the door wide open to remote code execution

- Check Point Research: RCE and API Token Exfiltration Through Claude Code Project Files

- CNBC: Pentagon clashes with Anthropic over military AI use