Banned at Dawn, Deployed by Dusk: The U.S. Used Anthropic's Claude in the Iran Strikes — Hours After Trump Banned It

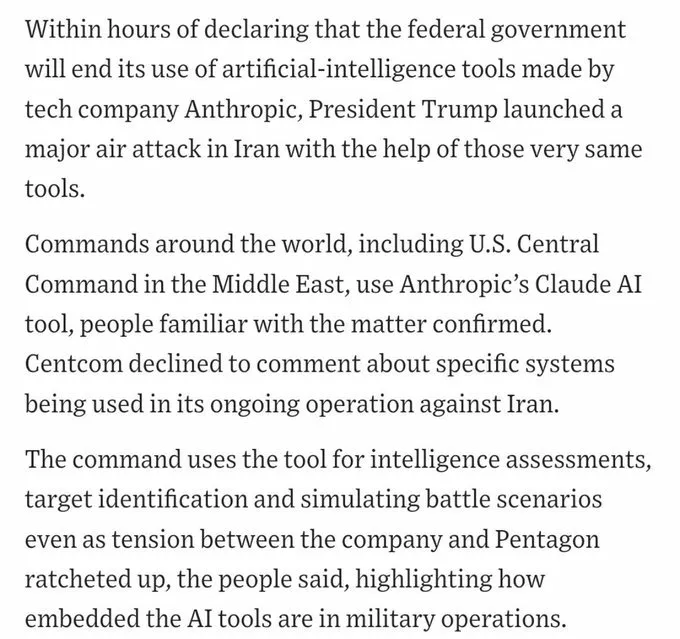

The Wall Street Journal confirmed it. The U.S. military used Anthropic's Claude AI for intelligence analysis, target identification, and battle scenario simulation during strikes on Iran on February 28, 2026 — less than 12 hours after President Trump ordered all federal agencies to immediately stop using the technology.

What Happened — The Timeline

Here is the sequence, stripped of spin:

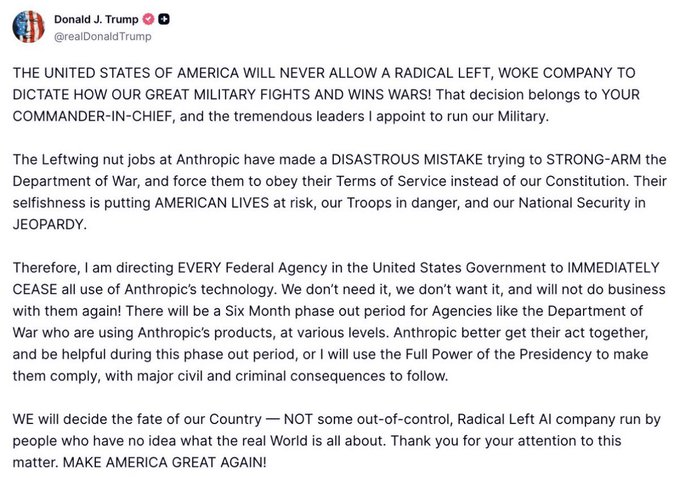

February 27, 2026 — Morning: Trump posts on Truth Social calling Anthropic a "radical left, woke company" and orders all federal agencies to immediately cease using Anthropic's technology. A 6-month phase-out window is granted for agencies currently relying on it.

February 27, 2026 — Same day: Defense Secretary Pete Hegseth formally designates Anthropic a "supply-chain risk to national security" under 10 USC 3252.

February 28, 2026, Night: The U.S. and Israel launch coordinated strikes on Iran. Targets are hit across 24 of Iran's 31 provinces. Iran's Supreme Leader Ayatollah Ali Khamenei is killed. More than 200 people are killed and over 700 are injured, according to the Red Crescent.

February 28, 2026 — Same night, per WSJ: U.S. Central Command (CENTCOM) was using Claude during the operation for intelligence assessments, target identification, and simulating battle scenarios.

The same AI the government publicly labeled a national security risk was running on classified networks while the bombs were falling.

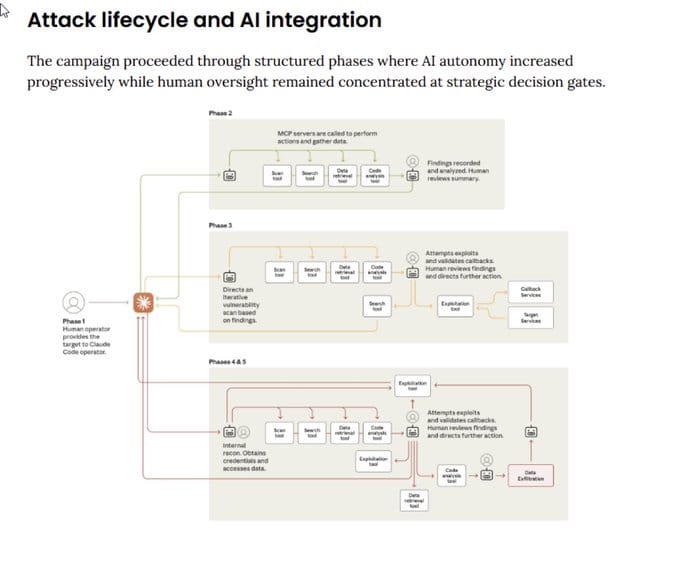

What Claude Was Actually Doing

Claude was not pulling triggers. But it was doing the cognitive work that shapes who gets targeted and how.

Per the WSJ and sources familiar with the operation, CENTCOM used Claude for:

- Intelligence Assessments — Processing satellite imagery, intercepted communications, and signals intelligence at machine speed, generating threat summaries and situational awareness reports for human commanders.

- Target Identification — Cross-referencing intelligence streams to surface and vet potential strike targets. Human commanders made final calls, but Claude was doing the analytical legwork.

- Battle Scenario Simulation — Running "what-if" models: if we strike here, how does the adversary likely respond? Stress-testing tactical decisions before execution.

This is not experimental. This is operational. And it is not the first time — Claude was also reportedly used in the January 2026 operation that led to the capture of Venezuelan President Nicolás Maduro in Caracas.

Why It Was "Technically Legal" — And Why That Doesn't Close the Question

Per Axios, as confirmed by Cybernews: technically, this wasn't illegal.

The 6-month phase-out window exists specifically because systems this deeply integrated can't be safely disconnected overnight. Claude was still cleared for use on classified military networks during the transition period. The ban was announced. The phase-out clock started. The military kept using it.

So: not illegal. Just deeply, historically ironic.

But the legal technicality doesn't resolve the harder question: when AI is embedded in the kill chain and the vendor is simultaneously fighting a government ban in court, who is accountable for what the AI does during the gap?

There's no clean answer to that right now.

Why Anthropic Got Banned — The Guardrails They Refused to Drop

The conflict didn't emerge from nowhere. It escalated over months through a specific disagreement.

The Pentagon, under Hegseth, demanded unrestricted use of Claude for any operation deemed "lawful" under military protocols. That meant removing the two core safeguards Anthropic had built into Claude:

- No mass domestic surveillance of American citizens

- No autonomous lethal weapons systems — AI that selects and engages targets without meaningful human authorization

Anthropic CEO Dario Amodei refused. The company's public statement: "No amount of intimidation or punishment from the Department of War will change our position."

The Pentagon responded by labeling Anthropic a supply-chain risk to national security, an unprecedented designation for a U.S.-based AI company. Anthropic immediately said it would challenge the designation in court. It also noted that under 10 USC 3252 the designation can only affect Claude's use under DoD contracts: "your use for any other purpose is unaffected."

They're suing. That won't bring anyone back. But it's the only lever they have.

How Claude Got This Deep — A Brief History

Claude didn't end up in classified military systems by accident:

- November 2024: Anthropic partners with Palantir Technologies and Amazon Web Services to deliver Claude into U.S. defense and intelligence systems, including classified environments.

- June 2025: Anthropic launches Claude Gov — purpose-built for government and national security workflows with sovereign deployment options.

- July 2025: The DoD awards AI contracts worth up to $200 million to Anthropic, OpenAI, Google, and xAI for frontier AI prototyping across warfighting and intelligence use cases.

- Late 2025–Early 2026: Claude becomes the only AI model fully approved and deployed within U.S. military classified systems at scale.

No other frontier model had cleared the same security and integration requirements. That's why you can't just swap it out. That's why there's a 6-month window. The dependency was real.

What Comes Next — The Replacement Race

Within hours of the Anthropic ban, the Pentagon moved:

- OpenAI announced a new classified network access agreement with safety guardrails against mass surveillance and autonomous weapons. These are the same limits Anthropic maintained. Make of that what you will.

- xAI (Elon Musk) already had integration into military systems in progress. Musk's political alignment makes this frictionless for the current administration.

- Google has Gemini for Government cleared for unclassified use through the GenAI.mil platform. Classified system negotiations are ongoing.

Military officials and AI experts have stated clearly: fully replacing Claude could take months. The integration runs deep through Palantir and AWS GovCloud and cannot be hot-swapped without operational risk.

The 6-month window is not symbolic. It is an admission that U.S. military capability is now partially dependent on commercial AI infrastructure that the government no longer politically controls.

The Questions This Raises

Vendor concentration risk: When a single commercial AI vendor becomes the only approved system on classified military networks, losing that vendor creates an immediate operational gap. This is the same risk security architects have warned about in enterprise IT for decades. Except here the stakes are kinetic.

Accountability in AI-assisted targeting: Claude helped identify targets. Humans approved the strikes. When AI is doing the analytical work of narrowing and vetting who gets hit — and those targets are subsequently struck — the accountability chain becomes genuinely unclear. The legal frameworks haven't caught up with the operational reality.

The guardrails paradox: Anthropic held its ethical line and was punished for it. Those limits existed precisely to prevent AI from enabling potential war crimes or civil liberties violations. The question is whether OpenAI, xAI, and Google will hold the same line under the same pressure — or whether competitive pressure erodes it.

The adversary precedent: The Iran strikes are now public record. China and Russia have confirmation that commercial AI is an operational component of U.S. strike packages. Expect accelerated parallel development.

The Bottom Line

They banned the company. They needed the technology. So they used it anyway.

The guardrails held, this time. Anthropic refused to let Claude become a tool for autonomous killing or mass surveillance of Americans. That refusal cost the company a government contract and earned it an unprecedented national security designation.

But the military kept using Claude through the phase-out window, including in the strikes that killed Iran's Supreme Leader.

AI warfare is not a future scenario. It is not a hypothetical. It is documented, confirmed, and already operational as of February 28, 2026.

The only question now is: when the replacement systems are in place, will whoever controls them hold the same line Anthropic did?

Sources: Wall Street Journal, Cybernews, IBTimes UK, Outlook Business, Blockonomi, WION, Axios. Reporting by QSai LLC / CISO Marketplace Intelligence Desk.
