CISA Issues Insider Threat Warning Days After Its Own Director Uploaded Secrets to ChatGPT

Executive Summary

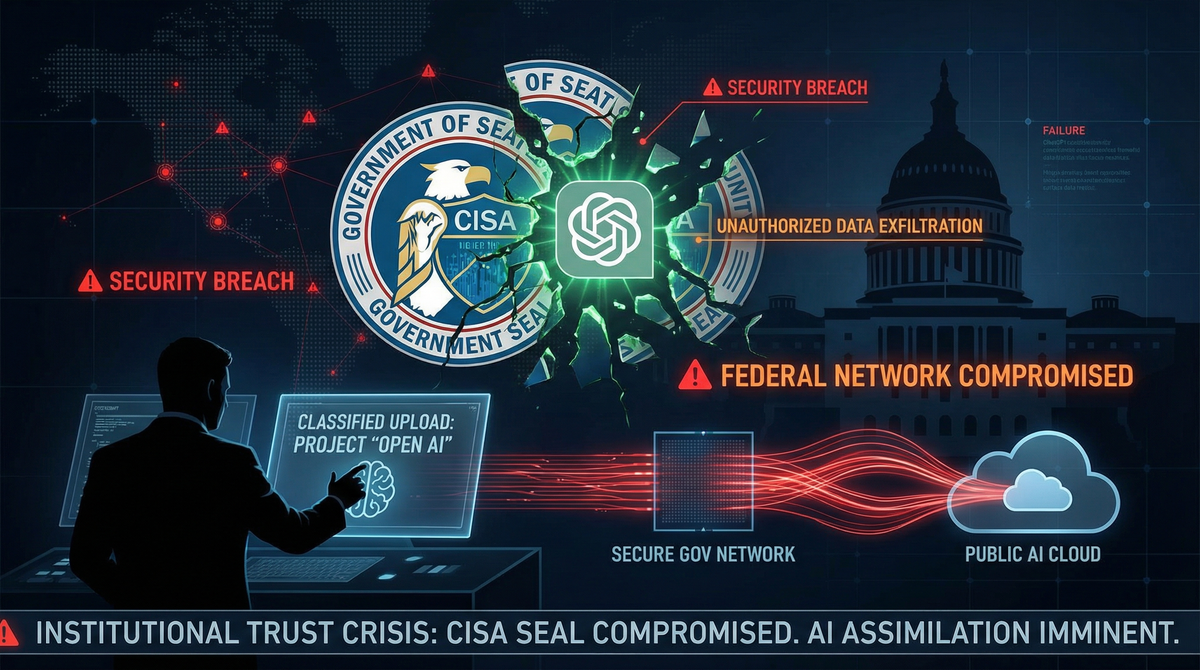

On January 29, 2026, the U.S. Cybersecurity and Infrastructure Security Agency (CISA) published guidance urging critical infrastructure organizations to "take decisive action" against insider threats, calling them "one of the most serious risks to organizational security." The timing could not have been more ironic: just two days earlier, Politico had reported that CISA's own acting director, Dr. Madhu Gottumukkala, had uploaded at least four sensitive government contracting documents to a public version of ChatGPT, triggering automated security warnings designed to prevent exactly this type of data exfiltration.

The scandal goes deeper. Gottumukkala failed a counterintelligence polygraph test in late July 2025, then placed the six career staffers who administered the exam on administrative leave. He also attempted to oust CISA's Chief Information Officer Robert Costello in January 2026. These revelations come amid a broader pattern of senior federal officials violating the very security protocols they're supposed to enforce, including Defense Secretary Pete Hegseth's use of insecure Signal connections for military operations and then-National Security Advisor Michael Waltz's use of personal Gmail accounts to coordinate sensitive national security matters.

Why This Matters to CISOs: This incident perfectly illustrates the emerging threat vector that keeps security executives awake at night: employees—including senior leadership—inadvertently (or deliberately) exposing sensitive data through AI tools like ChatGPT, Claude, and other large language models. It demonstrates that technical controls alone are insufficient when leadership culture doesn't prioritize security, and it provides a case study in how automated monitoring systems can detect insider threats in real-time. Most critically, it reveals the enforcement gap between federal cybersecurity guidance and federal cybersecurity practice—a gap that undermines trust in government security leadership at the worst possible moment.

Timeline of Events: When Hypocrisy Met Irony

Summer 2025: The Setup

June 2025: Gottumukkala requests access to a Controlled Access Program (CAP), a highly sensitive cyber intelligence classification that requires passing a counterintelligence polygraph examination.

Mid-July 2025: Gottumukkala receives special authorization from CISA's Office of the Chief Information Officer to use ChatGPT, despite the Department of Homeland Security blocking access to the AI chatbot by default for all other employees. According to CISA spokesperson Marci McCarthy, this "temporary exception" was granted to "some employees" for short-term use. Gottumukkala uses ChatGPT to process government contracting documents during this period. His last documented use of the platform occurs in mid-July 2025.

Late July 2025: Gottumukkala fails his counterintelligence polygraph examination. The specific questions that triggered deception indicators have not been publicly disclosed, but the failure raises red flags about his access to sensitive intelligence.

August 2025: DHS cybersecurity monitoring systems—the same automated tools designed to prevent data theft and insider threats—detect that Gottumukkala uploaded at least four documents marked "For Official Use Only" (FOUO) to ChatGPT's public instance. The uploads trigger multiple security warnings during the first week of August alone. While FOUO documents are not classified, they contain sensitive information not authorized for public release, including contracting details, internal processes, and potentially operational information.

Senior DHS leadership initiates an internal review to assess potential risks to government security. Gottumukkala meets with then-acting DHS General Counsel Joseph Mazzara and DHS Chief Information Officer Antoine McCord to discuss the breach. He also holds meetings with CISA CIO Robert Costello and Chief Counsel Spencer Fisher regarding the proper handling of sensitive material.

August-December 2025: The Department of Homeland Security launches an investigation into the circumstances surrounding Gottumukkala's failed polygraph. In a move that former CISA employees describe as retaliatory, Gottumukkala places the six career staffers who administered the polygraph exam on paid administrative leave. DHS officials tell the suspended employees that the polygraph "did not need to be administered"—a claim that raises questions about whether Gottumukkala used his position to punish those who exposed his counterintelligence vulnerabilities.

January 2026: The Scandal Goes Public

January 18, 2026: Politico reports that Gottumukkala attempted to remove CISA Chief Information Officer Robert Costello from his position. The reason for the attempted ouster is not publicly confirmed, but the timing—months after Costello participated in meetings about Gottumukkala's ChatGPT uploads—suggests potential retaliation against those involved in the security review.

January 27, 2026 (Tuesday): Politico publishes its investigation revealing that Gottumukkala uploaded sensitive CISA contracting files to a public ChatGPT instance, triggering automated security warnings. The story cites four unnamed Department of Homeland Security officials familiar with the matter. The documents were marked FOUO—a designation that prohibits public disclosure without authorization.

January 29, 2026 (Thursday): CISA releases its insider threat guidance, including an infographic titled "Assembling a Multi-Disciplinary Insider Threat Management Team." The guidance urges critical infrastructure entities to establish teams including HR personnel, legal counsel, security and IT leadership, and threat analysts to monitor, detect, and intervene against insider threats.

In the press release, Gottumukkala himself provides the statement: "Insider threats remain one of the most serious challenges to organizational security because they can erode trust and disrupt critical operations."

The Register's analysis of the timing is blunt: "Maybe everything is all about timing, like the time (this week) America's lead cyber-defense agency sounded the alarm on insider threats after it came to light that its senior official uploaded sensitive documents to ChatGPT. Or maybe it's about hypocrisy."

The CISA Insider Threat Guidance: A Mirror Held Up to Leadership

CISA's January 29 guidance outlines comprehensive best practices for detecting, preventing, and mitigating insider threats. The recommendations read like a checklist of controls that—if applied to Gottumukkala himself—would have prevented or quickly contained his ChatGPT uploads:

What CISA Recommends Organizations Do

- Assemble Multi-Disciplinary Teams: Include subject-matter experts from HR, legal, security/IT leadership, and threat analysts. Coordinate with external partners including law enforcement and health professionals as needed.

- Monitor for Potential Threats: Implement technical controls and user behavior analytics to detect anomalous activity, unauthorized data access, and data exfiltration attempts.

- Intervene as Needed: Establish clear escalation paths and intervention protocols to prevent damage to people, data, reputation, and operations.

- Address Both Malicious and Unintentional Threats: CISA explicitly notes that insider threats take two forms—"calculated acts of harm" and "unintentional mistakes." The guidance states: "Malicious insiders may exploit access for personal gain or revenge, causing severe damage to systems and trust. At the same time, negligence or simple human errors can open the door to vulnerabilities that adversaries can exploit."

- Foster a Culture of Trust: According to CISA Executive Assistant Director for Infrastructure Security Steve Casapulla, organizations should "foster a culture of trust where employees feel empowered to report concerns and stop threats before they escalate."

How CISA's Own Leadership Violated Each Principle

Multi-Disciplinary Oversight Failed: While DHS monitoring systems detected Gottumukkala's ChatGPT uploads, the "temporary exception" he received to use the platform in the first place suggests insufficient multi-disciplinary review. Who approved the exception? What justification was provided? What safeguards were implemented? These questions remain unanswered.

Monitoring Worked—But Response Failed: The automated warnings triggered in August 2025 prove that technical monitoring controls functioned as designed. However, the lack of immediate consequences for Gottumukkala—who continued in his role for months after the detection—suggests that intervention protocols either don't exist or aren't enforced for senior leadership.

Retaliation Instead of Accountability: Rather than accepting accountability for his failed polygraph, Gottumukkala allegedly placed the six career staffers who administered the exam on leave. If proven, this represents the opposite of the "culture of trust" CISA recommends organizations build.

Leadership Exemption Culture: The "temporary exception" that granted Gottumukkala access to ChatGPT—despite DHS policy blocking the tool for other employees—exemplifies a dangerous pattern in federal cybersecurity: senior officials operate under different security rules than the workforce they lead. This two-tier system undermines the foundational principle of insider threat programs: no one is above monitoring.

What Gottumukkala Did: A Deep Dive into the Security Breach

The Documents

At least four documents marked "For Official Use Only" were uploaded to ChatGPT's public instance. While the specific content hasn't been publicly disclosed, FOUO documents in the contracting context typically include:

- Vendor selection criteria and evaluation processes

- Pricing information and budget allocations

- Technical specifications for IT systems and cybersecurity tools

- Organizational structure and personnel details

- Operational processes and timelines

- Risk assessments and vulnerability information

The FOUO designation—while not classified—exists specifically to protect information that could:

- Give competitors or adversaries operational intelligence

- Compromise procurement integrity

- Expose security measures or gaps

- Violate vendor confidentiality agreements

- Undermine trust in government contracting processes

How the Security Systems Caught It

According to the Politico report, "automated security warnings intended to stop the theft or unintentional disclosure of government material from federal networks" detected the uploads. These systems likely include:

Data Loss Prevention (DLP) Tools: Modern DLP solutions scan outbound network traffic for sensitive document markers, classification labels, and content patterns. When Gottumukkala uploaded FOUO-marked files to ChatGPT (which requires internet connectivity), the DLP system would have identified the FOUO designation and flagged the transmission.

Cloud Access Security Brokers (CASB): DHS likely employs CASB solutions that monitor all cloud application usage, including AI chatbots. When Gottumukkala accessed ChatGPT—even with his "temporary exception"—the CASB would log the session, monitor data uploads, and alert on sensitive content transmission.

User and Entity Behavior Analytics (UEBA): Advanced UEBA systems establish baseline behavior patterns for each user. Gottumukkala uploading government documents to an external AI service would deviate from normal activity, triggering behavioral anomaly alerts.

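Network Traffic Analysis: On managed networks, a TLS-inspecting proxy (backed by a root certificate that agency devices trust) can decrypt outbound HTTPS sessions, allowing security teams to apply deep packet inspection to traffic bound for ChatGPT's servers and identify document uploads that would otherwise be opaque.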

The fact that "multiple security warnings" were triggered "during the first week" of August 2025 is consistent with one or more of the following:

- Multiple security tools detected the activity independently (defense in depth working as designed)

- Gottumukkala made multiple uploads, not a single mistake

- The warnings were likely escalated to senior security personnel quickly

The "DHS Controls" Defense

CISA spokesperson Marci McCarthy told The Register that Gottumukkala "was granted permission to use ChatGPT with DHS controls in place" and that "this use was short-term and limited." This defense raises more questions than it answers:

What "DHS Controls" Applied? If DHS has an enterprise ChatGPT deployment with data retention protections, encryption in transit and at rest, and compliance features (similar to ChatGPT Enterprise), why did the uploads trigger security warnings designed to detect unauthorized data exfiltration? The warnings suggest Gottumukkala used the public version of ChatGPT, not an enterprise instance with government-specific protections.

Why Was an Exception Granted? ChatGPT is blocked by default for DHS employees specifically because of data exfiltration risks. What business justification warranted granting Gottumukkala—a senior cybersecurity official who should understand these risks better than anyone—an exception?

Who Authorized the Exception? The approval chain for security policy exceptions is critical for accountability. Was the exception approved by the CISO, the CIO, the General Counsel, or Gottumukkala's direct supervisor? Or did Gottumukkala approve his own exception?

What Were the Limits? McCarthy described the use as "short-term and limited," with the last use in "mid-July 2025." Yet the security warnings in August suggest ongoing activity. Was the exception still active when violations occurred? If so, why? If not, did Gottumukkala continue using ChatGPT after his authorization expired?

The Failed Polygraph

The counterintelligence polygraph failure in late July 2025—just weeks before or after the ChatGPT uploads—adds another layer of concern. Polygraph examinations for Controlled Access Programs typically probe:

- Foreign contacts and foreign travel

- Financial pressures and vulnerabilities

- Contacts with foreign intelligence services

- Unauthorized disclosure of classified or sensitive information

- Trustworthiness and reliability

While polygraphs are not foolproof and can produce false positives, they remain a standard counterintelligence screening tool for access to highly sensitive intelligence. The fact that Gottumukkala failed the exam, then allegedly retaliated against the examiners, suggests either:

- The polygraph accurately detected deception about security-relevant matters, or

- Gottumukkala's reaction to a failed exam (if the retaliation allegations are proven) demonstrates concerning judgment and accountability gaps

The CIO Ouster Attempt

In January 2026, Gottumukkala reportedly attempted to remove CISA CIO Robert Costello from his position. Costello was one of the officials Gottumukkala met with in August 2025 to discuss "the appropriate handling of sensitive material" after the ChatGPT uploads were detected.

If the ouster attempt was retaliatory—and the timing suggests it may have been—it represents a textbook example of the insider threat behavior CISA's own guidance warns against: using organizational authority to punish those who identify or report security violations.

The Broader Federal Pattern: A Culture of Executive Exemption

Gottumukkala's ChatGPT uploads are not an isolated incident. They are part of a disturbing pattern of senior federal officials—particularly in the current administration—violating cybersecurity protocols with apparent impunity:

Defense Secretary Pete Hegseth's Signal Scandal

In March-April 2025, multiple news organizations reported that Secretary of Defense Pete Hegseth:

Installed an Insecure "Dirty" Internet Line: Hegseth reportedly installed an unauthorized internet connection in his Pentagon office specifically to bypass network security controls that prevented him from using Signal, an encrypted messaging app, on government networks. The Associated Press reported that this "dirty line" lacked the security monitoring and controls applied to official Pentagon networks.

Used Personal Devices for Military Operations: The Washington Post reported that Hegseth used Signal on his personal phone to share sensitive details about military operations in Yemen. The Register described this as an "Atlantic security disaster," noting that operations conducted via personal devices lack audit trails, data retention for oversight, and protection against foreign interception.

Participated in Multiple Signal Group Chats: Politico reported that Hegseth participated in at least 20 Signal group chats used by National Security Council staff to coordinate crisis responses "across the world." These chats bypassed official communication channels, creating gaps in federal records and oversight.

National Security Advisor Michael Waltz's Gmail Usage

In April 2025, The Register reported that National Security Advisor Michael Waltz and other members of the U.S. National Security Council used personal Gmail accounts to exchange information about an unnamed military conflict. This practice violates:

- Federal Records Act: Government business must be conducted on official systems to ensure record retention and FOIA compliance

- Classification Requirements: Classified information cannot be transmitted via commercial email services

- Operational Security: Personal email accounts are prime targets for foreign intelligence services (as demonstrated by Russia's 2016 hack of Clinton campaign chairman John Podesta's personal Gmail account)

Pattern Recognition: Leadership Exemption Culture

These incidents reveal three common threads:

1. Convenience Over Security: Senior officials consistently prioritize personal convenience (using familiar apps like Signal, ChatGPT, and Gmail) over security protocols. The excuse is often that official systems are "too cumbersome" or "don't support modern tools"—but these restrictions exist for documented security reasons.

2. Special Exception Syndrome: Rather than using official secure alternatives (such as DHS's internal AI chatbot or the Pentagon's classified messaging systems), senior officials demand and receive "temporary exceptions" to security policies that apply to everyone else. This two-tier system undermines security culture and sends a clear message: rules are for the rank-and-file, not for leadership.

3. Accountability Gaps: Despite public exposure, none of these officials has faced consequences proportionate to the violation. Gottumukkala remains CISA's acting director. Hegseth remains Secretary of Defense. Waltz left the National Security Advisor post in May 2025 but was subsequently nominated and confirmed as U.S. ambassador to the United Nations. The message to the federal workforce: security violations by senior officials are acceptable; security violations by junior employees are career-ending.

This pattern directly contradicts CISA's own cybersecurity principles, which emphasize that security must be a leadership priority and that accountability must apply uniformly.

The AI Agent Insider Threat: ChatGPT as a Data Exfiltration Vector

Gottumukkala's ChatGPT uploads exemplify a rapidly growing insider threat category that security researchers have been warning about since late 2022: AI-assisted data exfiltration.

How ChatGPT Becomes an Insider Threat

Data Training and Retention: When users upload documents to ChatGPT's public version (without Enterprise privacy settings), that data may be used to train OpenAI's language models. While OpenAI's privacy policy states that users can opt out of training data usage, the default setting allows it. Even with opt-out enabled, data is retained for 30 days for abuse monitoring. For government and corporate sensitive information, even temporary retention on external servers constitutes a security violation.

Unintended Disclosure: Users often paste sensitive information into ChatGPT prompts without realizing they're uploading it to OpenAI's servers. A 2025 report by LayerX found that 77% of employees paste company data into AI tools, often including customer records, financial data, source code, and strategic plans. The same report found that 82% use personal accounts (not enterprise versions with data protection), dramatically increasing exfiltration risk.

Prompt Injection and Data Extraction: If uploaded content is used for model training, it may become recoverable through training-data extraction attacks, in which adversaries craft prompts designed to make a model reproduce memorized text. Separately, prompt injection can trick AI tools that have connected plugins or data sources into leaking content from a user's own session. While OpenAI has implemented safeguards, researchers continue to demonstrate successful attacks of both kinds.

Third-Party Access: OpenAI's plugin ecosystem and API partnerships mean that data uploaded to ChatGPT may be accessed by third-party applications. While enterprise deployments can restrict this, public ChatGPT users have limited visibility into how their data flows through OpenAI's partner network.

The Scale of the Problem

The ChatGPT insider threat extends far beyond one CISA director:

Samsung Trade Secret Leak (2023): Samsung employees uploaded proprietary source code and internal meeting notes to ChatGPT, prompting the company to ban the tool entirely. The incident involved at least three separate employees in the span of a month, suggesting widespread use for work-related tasks.

Amazon Employee Usage (2023): Internal Amazon documents revealed that lawyers warned employees against using ChatGPT because it could create "trade secret disclosures" and violate confidentiality agreements with customers. Despite the warnings, employee usage continued.

Banking Sector Concerns (2023-2024): Major banks including JPMorgan Chase, Goldman Sachs, Bank of America, Citigroup, and Wells Fargo restricted or banned employee access to ChatGPT due to concerns about exposing customer data, trading strategies, and regulatory compliance information.

Microsoft Copilot Data Leaks (2024-2025): As Microsoft 365 Copilot rolled out to enterprises, security researchers documented cases of the AI assistant inadvertently exposing sensitive emails, chat messages, and documents to users who shouldn't have access—a phenomenon dubbed "oversharing."

Corporate vs. Federal Guidance

The corporate sector has largely addressed the AI data exfiltration threat through a combination of:

- DLP Integration: Modern DLP tools include ChatGPT and other AI chatbots in their monitoring scope, scanning prompts for sensitive data before transmission

- Enterprise AI Deployments: Organizations deploy ChatGPT Enterprise, Azure OpenAI, or private LLM instances with data retention controls, encryption, and compliance features

- Acceptable Use Policies: Clear policies specify which AI tools are approved, what data can be shared, and consequences for violations

- Security Awareness Training: Regular training includes AI-specific scenarios and risks

Yet CISA—the federal agency responsible for critical infrastructure cybersecurity—apparently granted its acting director a "temporary exception" to use the public version of ChatGPT without adequate safeguards. This decision violates the very principles CISA advocates.

What CISOs Should Learn: Turning Scandal into Strategy

While the CISA incident is embarrassing for federal cybersecurity leadership, it provides valuable lessons for CISOs navigating the AI threat landscape:

1. Implement AI-Specific Data Loss Prevention

Deploy DLP for AI Tools: Extend your DLP policies to monitor all major AI chatbots (ChatGPT, Claude, Google Gemini, Microsoft Copilot, etc.). Configure rules to detect:

- Document uploads containing classification markers (FOUO, Confidential, Internal Use Only, etc.)

- Pastes containing customer data, PII, financial information, or source code

- Prompts including email addresses, IP addresses, API keys, or credentials

- Volume-based anomalies (e.g., 100+ paste operations in a single session)
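The detection rules listed above can be sketched as a small set of pattern checks. This is a minimal illustration, not how any particular DLP product works: the `DETECTORS` table, the API-key shape, and the function names are all assumptions introduced here, and production tools use far more robust classifiers.

```python
import re

# Hypothetical detector patterns for illustration only.
DETECTORS = {
    "classification_marker": re.compile(
        r"\b(FOUO|For Official Use Only|Confidential|Internal Use Only)\b",
        re.IGNORECASE,
    ),
    "email_address": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "ipv4_address": re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"),
    # Assumed key shape: a short prefix followed by a long alphanumeric token.
    "api_key_like": re.compile(r"\b(?:sk|key|token)[-_][A-Za-z0-9]{20,}\b"),
}

PASTE_THRESHOLD = 100  # mirrors the "100+ paste operations" example


def scan_prompt(text: str) -> list[str]:
    """Return the names of every detector that matches the prompt text."""
    return [name for name, pattern in DETECTORS.items() if pattern.search(text)]


def is_volume_anomaly(paste_count: int) -> bool:
    """Flag sessions whose paste count reaches the configured threshold."""
    return paste_count >= PASTE_THRESHOLD
```

For example, `scan_prompt("FOUO: contact admin@example.gov at 10.0.0.5")` reports the classification marker, the email address, and the IP address, while an ordinary question returns an empty list.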

Real-Time Blocking: Configure DLP to block—not just alert—when sensitive data is detected in AI prompts. The few seconds of friction are worth preventing data exfiltration.

AI Tool Inventory: Maintain a current inventory of all AI tools your employees can access. Shadow AI (unapproved tools) poses the same risk as shadow IT.

Example DLP Rule:

IF content matches pattern:

[FOUO|Confidential|Internal Only|Customer Data]

AND destination matches:

[chatgpt.com|openai.com|claude.ai|gemini.google.com]

THEN action:

BLOCK + ALERT security team + LOG for investigation
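As a rough sketch, the rule above could be expressed in Python as follows. The marker list, the destination domains, and the `Decision` type are assumptions made for illustration; a real deployment would express this in the DLP vendor's own policy language.

```python
import re
from dataclasses import dataclass
from urllib.parse import urlparse

# Illustrative values only; the marker list and domains are assumptions.
SENSITIVE_MARKERS = re.compile(
    r"\b(FOUO|Confidential|Internal Only|Customer Data)\b", re.IGNORECASE
)
AI_DESTINATIONS = ("openai.com", "chatgpt.com", "claude.ai", "gemini.google.com")


@dataclass
class Decision:
    action: str  # "BLOCK" or "ALLOW"
    alert: bool  # whether to notify the security team
    reason: str  # recorded in the investigation log


def is_ai_destination(url: str) -> bool:
    """True when the URL's host is a listed AI domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in AI_DESTINATIONS)


def evaluate_upload(content: str, url: str) -> Decision:
    """Apply the rule: sensitive marker AND AI destination => block and alert."""
    match = SENSITIVE_MARKERS.search(content)
    if match and is_ai_destination(url):
        return Decision("BLOCK", True, f"marker '{match.group(0)}' sent to AI tool")
    return Decision("ALLOW", False, "no rule matched")
```

Matching on the parsed hostname rather than a substring of the URL avoids being fooled by lookalike hosts such as `notopenai.com.evil.example`.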

2. Deploy User and Entity Behavior Analytics (UEBA)

Establish Baselines: Modern UEBA solutions learn normal behavior patterns for each user—which applications they access, how much data they download, which file types they work with, and when they work.

Detect Anomalies: When a user suddenly starts uploading dozens of documents to a new AI tool, downloading sensitive repositories, or accessing systems outside their role, UEBA flags it for investigation.

Apply to All Users—Especially Leadership: The Gottumukkala case proves that senior leaders pose insider threat risks. Ensure UEBA applies to executives, not just rank-and-file employees.

Automated Response: Configure UEBA to automatically trigger step-up authentication, session recording, or temporary access restrictions when high-risk behavior is detected.
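The baseline-and-anomaly idea can be illustrated with a simple z-score over a user's daily upload counts. This is a deliberately simplified sketch: commercial UEBA products use much richer models, and the threshold of 3.0 and the function names are assumptions chosen for the example.

```python
from statistics import mean, stdev


def anomaly_score(history: list[float], today: float) -> float:
    """Return how many standard deviations today's value sits from the baseline."""
    if len(history) < 2:
        return 0.0  # not enough data to form a baseline
    baseline, spread = mean(history), stdev(history)
    if spread == 0:
        # Flat baseline: any increase is notable, so report infinity for it.
        return float("inf") if today > baseline else 0.0
    return (today - baseline) / spread


def response_for(score: float, threshold: float = 3.0) -> str:
    """Map a score to an automated response (one hypothetical option shown)."""
    return "step_up_authentication" if score >= threshold else "none"
```

A user who normally uploads two to four files a day and suddenly uploads forty scores far above the threshold; the same function applies regardless of the user's seniority.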

3. Build a Comprehensive Insider Threat Program

Follow CISA's own guidance (ironically, it's sound advice):

Multi-Disciplinary Team: Include HR, Legal, Security, IT, and Threat Intelligence. Each brings a unique perspective:

- HR: Identify employees under stress, facing termination, or exhibiting behavioral changes

- Legal: Ensure investigations comply with employment law and privacy regulations

- Security: Deploy technical monitoring and incident response

- IT: Implement controls, manage access, and provide forensic support

- Threat Intel: Identify patterns consistent with espionage, theft, or external coercion

Threat Indicators: Monitor for:

- Attempts to access data outside normal job responsibilities

- Large-scale downloads or data transfers

- Use of personal email/cloud storage for work files

- VPN/remote access from unusual locations

- Failed login attempts to sensitive systems

- Attempts to disable logging or security tools

- Documented grievances, performance issues, or resignation

Intervention Protocols: Establish clear escalation paths:

- Low Risk: Automated security awareness reminder (e.g., "We noticed you uploaded a file to ChatGPT. Please use our approved enterprise AI tools instead.")

- Medium Risk: Manager notification + security interview

- High Risk: Immediate access restriction + forensic investigation + legal consultation
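The escalation tiers above could be encoded as a small triage function. The three input signals and the cut-off of three repeats are hypothetical choices for illustration; each organization would define its own. Note that the function takes no role or seniority input, so the same event produces the same tier for any user.

```python
from enum import Enum


class Tier(Enum):
    LOW = "automated awareness reminder"
    MEDIUM = "manager notification and security interview"
    HIGH = "access restriction, forensic investigation, legal consultation"


def triage(marked_sensitive: bool, repeat_count: int, external_destination: bool) -> Tier:
    """Pick an escalation tier from three hypothetical signals about an event."""
    if marked_sensitive and external_destination:
        return Tier.HIGH
    if marked_sensitive or repeat_count >= 3:
        return Tier.MEDIUM
    return Tier.LOW
```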

4. Enforce Executive Accountability

No Exemptions: Security policies must apply uniformly. If junior employees can't use ChatGPT, neither can the CEO. This isn't about hierarchy—it's about risk.

Leadership Modeling: Executives set the security culture. When they demand exceptions, bypass controls, or ignore policies, they signal that security is optional. Ensure leadership visibly complies with security measures.

Consequence Consistency: If an entry-level employee uploading FOUO documents to ChatGPT would face termination, the acting director of CISA should face equivalent consequences. Accountability must be position-agnostic.

Board-Level Reporting: Report executive security violations to the board or oversight body. Senior officials should not investigate themselves.

5. Deploy Privileged Access Management (PAM)

Just-In-Time Access: Rather than granting permanent elevated privileges, implement JIT access that grants admin rights only when needed for specific tasks, then automatically revokes them.

Session Recording: Record all privileged sessions—including those by senior executives. This creates an audit trail and deters misuse.

Break-Glass Procedures: Emergency access ("break glass in case of fire") should be logged, time-limited, and automatically reported to security leadership.

Separation of Duties: No single person—including the CISO or CIO—should be able to approve their own security policy exceptions, disable their own monitoring, or delete their own audit logs.
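Two of the controls above, time-limited access and separation of duties, can be combined in a sketch of an exception-approval record. The `ExceptionGrant` type and `approve_exception` function are hypothetical names introduced here, not part of any PAM product's API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class ExceptionGrant:
    requester: str
    approver: str
    tool: str
    granted_at: datetime
    duration: timedelta

    def is_active(self, at: datetime) -> bool:
        """A grant is valid only inside its time window."""
        return self.granted_at <= at < self.granted_at + self.duration


def approve_exception(
    requester: str, approver: str, tool: str, granted_at: datetime, duration: timedelta
) -> ExceptionGrant:
    """Create a grant, refusing self-approval (separation of duties)."""
    if requester.strip().lower() == approver.strip().lower():
        raise PermissionError("requester cannot approve their own exception")
    if duration <= timedelta(0):
        raise ValueError("duration must be positive")
    return ExceptionGrant(requester, approver, tool, granted_at, duration)
```

Because every grant carries its own expiry, a monitoring system can answer "was this exception still active when the alert fired?" by calling `is_active` with the alert's timestamp.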

6. Implement Continuous Monitoring

Always-On Detection: Insider threats don't operate on a 9-to-5 schedule. Deploy 24/7 monitoring with automated alerting.

Cloud and SaaS Monitoring: As Gottumukkala's case demonstrates, insider threats increasingly involve cloud services and SaaS applications. Ensure monitoring covers:

- Shadow IT / unapproved cloud services

- Personal cloud storage (Dropbox, Google Drive, OneDrive)

- AI chatbots and productivity tools

- Collaboration platforms (Slack, Teams, Discord)

- Code repositories (GitHub, GitLab, Bitbucket)

Log Retention: Maintain sufficient log retention for investigations. In the Gottumukkala case, the uploads were flagged in August 2025 but did not become public until January 2026, roughly five months later; any follow-up review at that point would depend on logs retained at least that long.
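The retention arithmetic is easy to check. The sketch below uses August 1, 2025 as an assumed stand-in for "the first week of August" and the January 27, 2026 Politico publication date as the review date; both endpoints are illustrative, and the function names are hypothetical.

```python
from datetime import date


def required_retention_days(event: date, review: date) -> int:
    """Minimum retention needed for the event's logs to survive until review."""
    return (review - event).days


def retention_covers(event: date, review: date, retention_days: int) -> bool:
    """True if a log written on `event` still exists on `review`."""
    return required_retention_days(event, review) <= retention_days


# Assumed endpoints: early August 2025 detection, late January 2026 review.
gap = required_retention_days(date(2025, 8, 1), date(2026, 1, 27))
print(gap)  # 179
```

Under these assumptions, a 90-day retention policy would have lost the relevant logs, while a 180-day policy would just cover them.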

7. Educate on AI-Specific Risks

Update Security Awareness Training: Most security awareness programs focus on phishing, password security, and physical security. Add AI-specific modules:

- What happens to data uploaded to public AI tools

- Enterprise AI alternatives with data protection

- Prompt injection attacks and adversarial use of AI

- How to identify AI-generated phishing and deepfakes

Role-Based Training: Tailor training to roles:

- Developers: Using Copilot safely, avoiding source code leaks

- Executives: Executive-targeted AI attacks (deepfake video calls, voice cloning)

- HR/Finance: Using AI for sensitive employee/financial data

- Customer Service: AI chatbot security and customer data protection

Red Team Exercises: Conduct AI-focused red team exercises where you simulate:

- An employee using ChatGPT to "help" with a confidential merger

- An attacker using prompt injection to extract internal data

- A deepfake video call from the "CFO" requesting a wire transfer

8. Establish Clear AI Governance

Approved AI Tools: Maintain a list of approved AI tools with enterprise data protection:

- ChatGPT Enterprise (not public ChatGPT)

- Azure OpenAI Service

- Amazon Bedrock

- Google Cloud Vertex AI

- Self-hosted open-source models

AI Acceptable Use Policy: Define clearly:

- What types of data can be used with AI tools (and what cannot)

- Which AI tools are approved (and which are banned)

- Consequences for violations

- How to request exceptions (with documented approval chain)

Regular Audits: Audit AI tool usage quarterly:

- Which tools are employees using?

- What data are they sharing?

- Are approved enterprise versions being used, or public versions?

- Are any high-risk patterns emerging?
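A quarterly audit along these lines might start from a simple aggregation over usage logs. The event shape (`user`, `tool`, `sensitive`), the tool identifiers, and the `APPROVED` set are assumptions made for this sketch; real logs from a CASB or proxy would need to be normalized into something similar first.

```python
from collections import Counter

# Hypothetical set of approved enterprise tools.
APPROVED = {"chatgpt-enterprise", "azure-openai"}


def audit(events: list[dict]) -> dict:
    """Summarize usage events shaped like {"user", "tool", "sensitive"}."""
    by_tool = Counter(e["tool"] for e in events)
    unapproved = [e for e in events if e["tool"] not in APPROVED]
    return {
        # Which tools are employees using?
        "events_by_tool": dict(by_tool),
        # Are approved enterprise versions being used, or public ones?
        "unapproved_share": len(unapproved) / len(events) if events else 0.0,
        # Who is sharing sensitive data with unapproved tools?
        "users_sharing_sensitive_data_unapproved": sorted(
            {e["user"] for e in unapproved if e["sensitive"]}
        ),
    }
```

Tracking `unapproved_share` quarter over quarter gives a single number for whether enterprise alternatives are actually displacing public tools.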

Conclusion: The Irony and What It Reveals

The greatest irony of the CISA ChatGPT scandal isn't just the timing, though publishing insider threat guidance two days after being exposed for an insider threat is almost cartoonishly hypocritical. The deeper irony is that the automated security systems worked exactly as designed. Monitoring tools detected the uploads. The alerts fired. The logs captured the evidence. Security, legal, and CIO leadership convened to assess the risk.

But then the process failed. Instead of consequences, there were exceptions. Instead of accountability, there were rationalizations. Instead of leadership modeling security culture, there was alleged retaliation against those who flagged the problem.

This failure extends beyond one acting director. It reflects a systemic challenge in federal cybersecurity: the gap between the guidance we publish and the culture we practice. CISA's insider threat recommendations are sound. The multi-disciplinary team approach works. The emphasis on both malicious and unintentional threats is correct. The technology (DLP, UEBA, automated monitoring) functions.

But none of that matters if leadership doesn't believe the rules apply to them.

Trust in Federal Cybersecurity Leadership

For CISOs in critical infrastructure sectors—energy, healthcare, finance, transportation—CISA's guidance has long been a trusted resource. But trust requires credibility, and credibility requires consistency between what we preach and what we practice.

When the acting director of the nation's cyber defense agency uploads sensitive documents to ChatGPT, fails a counterintelligence polygraph, allegedly retaliates against those who administered it, and faces no apparent consequences, it erodes trust in federal cybersecurity leadership. It raises uncomfortable questions:

- If CISA leadership doesn't follow its own guidance, why should critical infrastructure operators?

- If federal officials get "temporary exceptions" to security policies, are we really serious about insider threats?

- If executives can bypass controls while demanding that employees comply, what message does that send?

The private sector has learned these lessons, often through painful, expensive breaches. Major federal contractors have lost billions in contracts due to security failures. Banks have fired executives for compliance violations. Tech companies have banned AI tools until enterprise alternatives with data protection were deployed.

The federal government—and CISA specifically—should hold itself to the same standard it demands of critical infrastructure operators.

Practical Takeaways

For CISOs, this scandal provides a clear roadmap:

- Deploy AI-specific DLP to monitor ChatGPT, Claude, and all major AI chatbots

- Implement UEBA to detect anomalous behavior, especially by privileged users

- Build multi-disciplinary insider threat teams (as CISA recommends)

- Enforce policies uniformly—no executive exemptions

- Use enterprise AI tools with data protection, not public versions

- Monitor continuously, including cloud and SaaS applications

- Update security training to cover AI-specific risks and scenarios

- Establish AI governance with clear acceptable use policies

Most importantly: Model the behavior you expect. If you're the CISO, use the approved AI tools. If you're the CEO, comply with the access controls. If you're on the board, ask hard questions about executive compliance.

Security culture flows from the top. When leadership treats security as optional, everyone else will too. When leadership follows the same rules as everyone else—and faces the same consequences for violations—security becomes part of organizational DNA.

That's the lesson of the CISA ChatGPT scandal. Not that insider threats are serious (we already knew that). Not that AI tools pose data exfiltration risks (we already knew that too). But that hypocrisy is the enemy of security culture, and the most dangerous insider threat isn't malice—it's the executive who believes the rules don't apply to them.

References

- CISA Urges Critical Infrastructure Organizations to Take Action Against Insider Threats - CISA, January 29, 2026

- Assembling a Multi-Disciplinary Insider Threat Management Team - CISA Infographic, January 2026

- Trump's acting cyber chief uploaded sensitive files into a public version of ChatGPT - Politico, January 27, 2026

- Maybe CISA should take its own advice about insider threats hmmm? - The Register, January 29, 2026

- Acting CISA director failed a polygraph. Career staff are now under investigation - Politico, December 21, 2025

- Acting CISA chief sought ouster of agency's chief information officer - Politico, January 18, 2026

- Madhu Gottumukkala - Wikipedia

- 13 Years Later: How the Federal Government Ignored a Cybersecurity Warning - Breached Company

- Treasury Department Terminates All Contracts with Booz Allen Hamilton - Breached Company

- Data Privacy Week 2026: Why 77% of Employees Are Leaking Corporate Data Through AI Tools - Breached Company